What tracking is

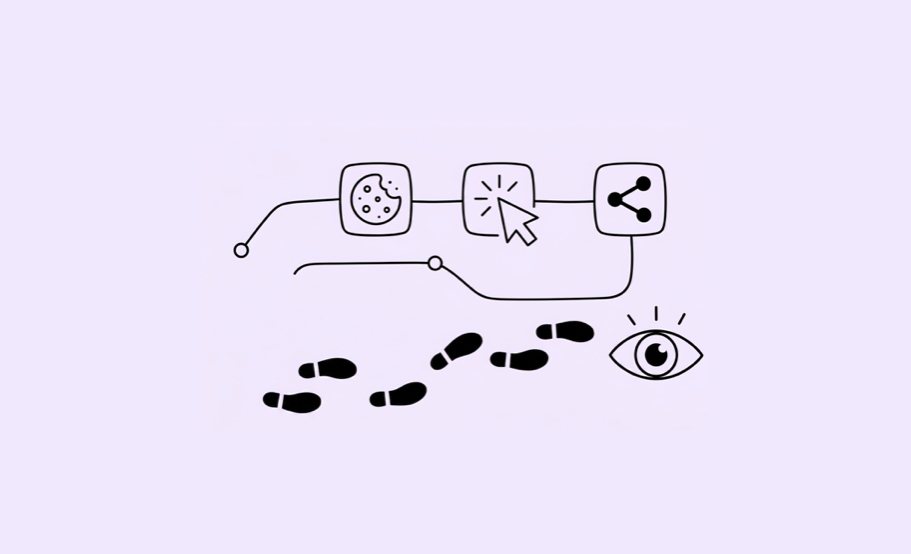

Tracking refers to the set of mechanisms that record user behavior in a digital environment. In other words, it is the system that translates real actions into observable data.

When someone opens an article, scrolls, plays a video, clicks a link, or starts a subscription process, that behavior does not magically appear in a dashboard. There must be prior instrumentation that captures it and turns it into a measurable signal.

Understanding tracking matters because it helps avoid a very common mistake: thinking that data “is simply there.” In reality, data exists because someone defined what to measure, how to name it, when to record it, and how it will later be interpreted.

Measuring well is not just having an analytics tool. It is having coherent instrumentation.

Tags, events, and parameters

A basic tracking system usually relies on three ideas:

Tags: pieces of code or configurations that activate data collection and send information to the measurement tool.

Events: specific actions that are recorded, such as video playback, link clicks, or reaching the end of an article.

Parameters: additional information attached to the event, such as content name, section, device type, author, topic, or traffic source.

In a newsroom, this is especially useful because it allows us to know not only that “something happened,” but exactly what happened, where, and on which editorial piece.

A simple example: if a user plays a video, the event may be “video_start.” But for that data to be valuable, it should be accompanied by parameters such as video title, duration, section, device, or traffic source.
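As a rough illustration, the “video_start” example can be written as an event name plus a dictionary of parameters. This is only a sketch: the parameter keys (video_title, video_duration_s, and so on), the helper build_video_start, and the sample values are hypothetical, and a real analytics tool will define its own field names.

```python
from dataclasses import dataclass, field, asdict
import json


@dataclass
class TrackingEvent:
    """One recorded action plus the parameters that give it context."""
    name: str
    params: dict = field(default_factory=dict)


def build_video_start(title, duration_seconds, section, device, source):
    """Build a 'video_start' event. The parameter keys are illustrative only."""
    return TrackingEvent(
        name="video_start",
        params={
            "video_title": title,
            "video_duration_s": duration_seconds,
            "section": section,
            "device": device,
            "traffic_source": source,
        },
    )


# Sample values are invented for the example.
event = build_video_start("Election night recap", 184, "politics", "mobile", "newsletter")
print(json.dumps(asdict(event), indent=2))
```

Without the parameters, the event would only say that some video started somewhere; with them, it can be grouped by section, device, or source.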

What UTMs are

UTMs (short for Urchin Tracking Module) are parameters added to a URL to identify the origin of a visit. They are widely used in campaigns, newsletters, social media posts, or internal promotions.

Their value is practical: they allow us to know where traffic comes from and compare the performance of different channels. Without well-defined UTMs, part of the acquisition analysis becomes imprecise or even impossible.

The most common problem is not using too few UTMs, but using them without clear criteria. When different teams name campaigns or channels inconsistently, the result is fragmented data. Nearly identical labels appear, campaigns are duplicated, or classifications become unreliable.

For this reason, even in a small organization, it is advisable to establish a clear and shared naming convention.
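One way to enforce a shared convention is to build tagged links through a small helper instead of typing them by hand. The sketch below assumes a convention of lowercase labels with hyphens between words; that rule, and the helper names normalize_label and add_utm, are illustrative choices rather than a standard. The query keys utm_source, utm_medium, and utm_campaign are the parameter names commonly used for UTMs.

```python
import re
from urllib.parse import urlencode, urlsplit, urlunsplit, parse_qsl


def normalize_label(value: str) -> str:
    """Lowercase the label and turn runs of spaces/underscores into one hyphen."""
    cleaned = re.sub(r"[\s_]+", "-", value.strip().lower())
    return re.sub(r"-{2,}", "-", cleaned)


def add_utm(url: str, source: str, medium: str, campaign: str) -> str:
    """Return the URL with normalized UTM parameters, replacing any existing ones."""
    parts = urlsplit(url)
    # Keep non-UTM query parameters, drop any UTM values already present.
    query = [
        (key, val)
        for key, val in parse_qsl(parts.query, keep_blank_values=True)
        if not key.startswith("utm_")
    ]
    query += [
        ("utm_source", normalize_label(source)),
        ("utm_medium", normalize_label(medium)),
        ("utm_campaign", normalize_label(campaign)),
    ]
    return urlunsplit(parts._replace(query=urlencode(query)))


print(add_utm("https://example.com/article", "Newsletter", "email", "Weekly Digest"))
# https://example.com/article?utm_source=newsletter&utm_medium=email&utm_campaign=weekly-digest
```

Because every team goes through the same function, “Weekly Digest” and “weekly_digest” end up as the same label.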

Data quality

Data quality is a prerequisite for analysis. If data collection is flawed, the analysis will be flawed as well.

Common problems include:

• events firing twice

• content without consistent tagging

• empty or poorly completed fields

• differences between tools that are not understood

• technical changes that alter measurement without documentation
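Two of these problems, events firing twice and empty fields, can be checked automatically on a raw event export. The following is a minimal sketch under stated assumptions: each event is a dictionary with user_id, name, content_id, section, and timestamp keys, and two events with the same user, name, content, and timestamp count as a duplicate. Both the field names and the duplicate rule are hypothetical and would need adapting to the actual export.

```python
from collections import Counter

# Hypothetical list of parameters that every event is expected to carry.
REQUIRED_PARAMS = ("content_id", "section")


def find_duplicates(events):
    """Return (user, event name, content, timestamp) keys seen more than once."""
    counts = Counter(
        (e["user_id"], e["name"], e.get("content_id"), e["timestamp"])
        for e in events
    )
    return [key for key, n in counts.items() if n > 1]


def find_missing_params(events):
    """Return (event index, parameter) pairs where a required value is absent or blank."""
    problems = []
    for index, event in enumerate(events):
        for param in REQUIRED_PARAMS:
            value = event.get(param)
            if value is None or str(value).strip() == "":
                problems.append((index, param))
    return problems


# Invented sample data: one double-fired event and one empty section.
events = [
    {"user_id": "u1", "name": "video_start", "content_id": "a42", "section": "politics", "timestamp": 1000},
    {"user_id": "u1", "name": "video_start", "content_id": "a42", "section": "politics", "timestamp": 1000},
    {"user_id": "u2", "name": "link_click", "content_id": "a43", "section": "", "timestamp": 1005},
]

print(find_duplicates(events))      # [('u1', 'video_start', 'a42', 1000)]
print(find_missing_params(events))  # [(2, 'section')]
```

Checks like these do not explain why a problem occurs, but they show where to start asking.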

In practice, a newsroom does not need to master the entire measurement architecture, but it should be able to ask basic control questions:

• Are we measuring what we think we are measuring?

• Are definitions stable over time?

• Do the same concepts mean the same thing across all areas?

• Can we compare results without mixing different categories?

A mature data culture often begins with a healthy degree of skepticism toward numbers that appear precise but may not actually be comparable.

What should be measured in text and video

For text content, a reasonable minimum often includes:

• page views or content views

• users

• reading or attention time

• scroll depth or completion

• traffic source

• associated conversions, if applicable

For video, in addition to plays, it is usually useful to measure:

• playback start

• percentage watched

• completion

• average watch time

• early abandonment

• traffic source

The key is not to confuse abundance with usefulness. Not everything needs to be measured with the same level of detail. It is better to prioritize what answers an editorial or business question.
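To make the video metrics concrete, the sketch below derives them from a list of watch times, one per playback start. The thresholds are assumptions chosen for illustration: a play counts as completed at 95% watched and as an early abandonment below 10%. Each newsroom would need to agree on its own definitions.

```python
from statistics import mean

# Assumed definitions; adjust to whatever the newsroom agrees on.
COMPLETION_THRESHOLD = 0.95
EARLY_ABANDON_THRESHOLD = 0.10


def summarize_video(duration_s, watch_times_s):
    """Summarize plays of one video. watch_times_s holds seconds watched per play."""
    if duration_s <= 0 or not watch_times_s:
        raise ValueError("need a positive duration and at least one play")
    # Cap each play at the video length so replays do not exceed 100%.
    capped = [min(w, duration_s) for w in watch_times_s]
    fractions = [w / duration_s for w in capped]
    plays = len(fractions)
    return {
        "plays": plays,
        "avg_watch_time_s": mean(capped),
        "avg_percent_watched": 100 * mean(fractions),
        "completion_rate": sum(f >= COMPLETION_THRESHOLD for f in fractions) / plays,
        "early_abandon_rate": sum(f < EARLY_ABANDON_THRESHOLD for f in fractions) / plays,
    }


# Invented sample: a 200-second video with four plays.
summary = summarize_video(200, [10, 100, 200, 200])
print(summary)
```

Changing either threshold changes the completion and abandonment rates, which is why the definitions need to be written down before results are compared.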

Minimum instrumentation checklist

Before trusting a dashboard, a newsroom should at least be able to verify the following:

• each piece of content has clear identifiers

• sections and content types are standardized

• important events are defined

• traffic sources are classified consistently

• there is a shared criterion for conversions

• relevant technical changes are documented

Try it yourself

Your newsroom has shared the following traffic data from the past week. Before you start analysing it, take a look at the UTM labels that came in:

| Source | Campaign | Clicks |
|---|---|---|
| newsletter | weekly-digest | 1840 |
| newsletter | Weekly Digest | 920 |
| newsletter | weekly_digest | 610 |
| newsletter | weekly-digest-jan | 340 |
| social | | 2100 |
| social | | 980 |
| (not set) | (not set) | 4200 |

Consider:

- How much traffic actually came from your newsletter this week? Can you tell with confidence?

- What naming problem do you spot — and how many people do you think caused it?

- What does (not set) most likely mean, and what does it tell you about your instrumentation?

- If you had to write a one-sentence naming convention for your team’s UTMs, what would it say?

This kind of fragmented data is extremely common. Recognising it is the first step to fixing it.
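One possible way to make the fragmentation visible is to group the newsletter rows by a normalized campaign label and list the raw variants behind each group. The sketch below uses only the newsletter rows from the table; the normalization rule (lowercase, hyphens instead of spaces or underscores) is an assumed convention, and whether weekly-digest-jan belongs with the others remains a judgment call the code does not settle.

```python
import re
from collections import defaultdict

# Newsletter rows copied from the table above: (source, campaign, clicks).
rows = [
    ("newsletter", "weekly-digest", 1840),
    ("newsletter", "Weekly Digest", 920),
    ("newsletter", "weekly_digest", 610),
    ("newsletter", "weekly-digest-jan", 340),
]


def normalize(label):
    """Assumed convention: lowercase, hyphens instead of spaces or underscores."""
    return re.sub(r"[\s_]+", "-", label.strip().lower())


totals = defaultdict(int)    # clicks per normalized (source, campaign)
variants = defaultdict(set)  # raw spellings that map to each normalized key

for source, campaign, clicks in rows:
    key = (source, normalize(campaign))
    totals[key] += clicks
    variants[key].add(campaign)

for key, clicks in totals.items():
    print(key, clicks, sorted(variants[key]))
```

Three spellings collapse into one campaign with 3370 clicks, while weekly-digest-jan stays separate with 340.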