By the end of this lesson, the participant will be able to:

- understand how to analyze performance by author, series, section, or format

- recognize the value and risk of measuring editorial performance at a granular level

- distinguish between quantity metrics and quality metrics

- understand the need for governance and more robust definitions when data is used for recurring decisions

From cross-channel comparison to granular measurement

In Module 2, the focus was on organizing cross-platform measurement and understanding what could be compared across channels. At this level, the next step is to go deeper and analyze performance within the editorial product:

- by author

- by series or editorial franchise

- by section

- by format

- by product or owned channel

This granularity can be very powerful, but it is also more delicate: the finer the breakdown, the more the analysis depends on consistent definitions and sufficient context.

Measuring by author, format, or series

Analyzing by author or format can help detect:

- engagement patterns

- differences in recurrence

- thematic specialization

- contribution to registration or loyalty

However, it can also lead to misreadings if we forget that journalists do not all cover comparable topics and that formats do not all serve the same function. For this reason, granular data must be read with editorial context.

Quantity metrics vs. quality metrics

One of the risks at this level is using indicators that reward only volume. To avoid this, it is important to distinguish between:

- quantity metrics: publications, users, views, production frequency

- quality or value metrics: reading time, recurrence, conversion, satisfaction, depth of consumption, retention

A mature Data Driven culture needs both dimensions, but must avoid letting the former override the latter.

Guardrails and incentives

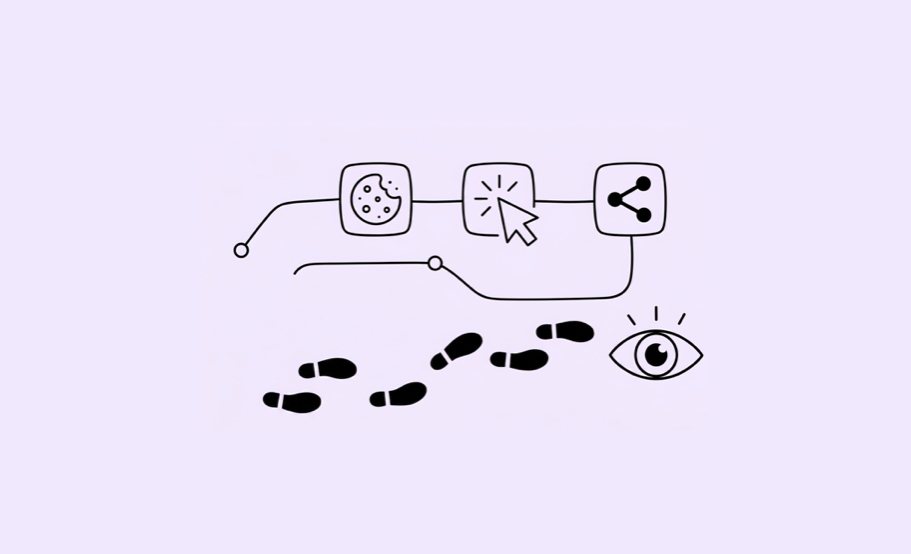

When an organization measures more granularly, it must also consider what behaviors it may be incentivizing. If only volume is rewarded, the result may be short-term thinking, clickbait, or unbalanced production.

For this reason, it is useful to introduce guardrails—metrics or balancing criteria that prevent simplistic interpretations. For example:

- not evaluating only by traffic, but also by recurrence or conversion

- not comparing authors without considering the type of coverage

- not treating all formats as equivalent
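
The guardrails above can be sketched in a few lines of Python. This is a minimal illustration, not a recommended implementation: the `AuthorStats` fields, the coverage labels, and every figure in `STATS` are hypothetical. It compares authors only within the same type of coverage, and it flags any group where the traffic leader and the conversion leader differ, so that traffic alone does not settle the comparison.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AuthorStats:
    author: str
    coverage: str     # type of coverage, e.g. "breaking" or "investigative"
    page_views: int   # quantity metric
    conversions: int  # quality/value metric (registrations or subscriptions)


# Hypothetical figures, for illustration only.
STATS = [
    AuthorStats("Author 1", "breaking", 120_000, 3),
    AuthorStats("Author 2", "breaking", 90_000, 9),
    AuthorStats("Author 3", "investigative", 60_000, 15),
    AuthorStats("Author 4", "investigative", 55_000, 11),
]


def leaders_by_coverage(stats):
    """For each coverage type, name the leader on traffic and on conversions."""
    groups = defaultdict(list)
    for row in stats:
        groups[row.coverage].append(row)

    report = {}
    for coverage, rows in groups.items():
        top_traffic = max(rows, key=lambda r: r.page_views).author
        top_conversions = max(rows, key=lambda r: r.conversions).author
        report[coverage] = {
            "top_traffic": top_traffic,
            "top_conversions": top_conversions,
            # When the two leaders differ, a traffic-only ranking is misleading.
            "needs_context": top_traffic != top_conversions,
        }
    return report


report = leaders_by_coverage(STATS)
for coverage, summary in report.items():
    print(coverage, summary)
```

A recurrence metric could be added in the same way as `conversions`. The point of the sketch is only that a volume ranking is never shown without a balancing metric beside it.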

Minimum data governance for recurring decisions

As data becomes part of stable routines, the need for governance grows:

- shared definitions

- more carefully designed taxonomies

- minimal but useful documentation

- responsibility for data quality

- clear rules for interpreting sensitive comparisons

At a Data Driven level, measurement stops being an optional layer and becomes shared infrastructure.
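
One lightweight way to make shared definitions and data-quality ownership concrete is a small metric registry. The sketch below is an assumption about how such a registry might look, not a prescribed tool: the `MetricDefinition` fields, the wording of each definition, and the owners are all hypothetical. The helper `undocumented` lists any metric that appears in a report without an agreed definition.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricDefinition:
    name: str
    kind: str             # "quantity" or "quality"
    definition: str       # the shared, agreed wording
    owner: str            # who is responsible for data quality
    comparison_rule: str  # how sensitive comparisons should be read


# Hypothetical entries, for illustration only.
REGISTRY = {
    "page_views": MetricDefinition(
        name="page_views",
        kind="quantity",
        definition="Page loads per article over the reporting period.",
        owner="data team",
        comparison_rule="Compare only within the same section and format.",
    ),
    "reading_time": MetricDefinition(
        name="reading_time",
        kind="quality",
        definition="Average active time on the article page.",
        owner="data team",
        comparison_rule="Do not compare across formats of different length.",
    ),
}


def undocumented(metric_names, registry):
    """Return the metrics used in a report that lack a shared definition."""
    return [name for name in metric_names if name not in registry]


missing = undocumented(["page_views", "reading_time", "recirculation_rate"], REGISTRY)
print(missing)
```

Running a check like this before a dashboard is circulated keeps the documentation minimal while still catching metrics that nobody has defined or taken responsibility for.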

Try it yourself

Your newsroom is rolling out author-level performance tracking for the first time. The data team shares this table with editorial leadership:

| Author | Articles per month | Avg. page views | Avg. reading time (min:sec) | Recirculation rate | Subscriber conversions |
|---|---|---|---|---|---|
| Writer A | 28 | 4,200 | 1:05 | 8% | 2 |
| Writer B | 9 | 18,400 | 7:45 | 34% | 19 |
| Writer C | 22 | 6,100 | 3:20 | 18% | 8 |
| Writer D | 6 | 2,800 | 9:10 | 41% | 14 |

The editor-in-chief glances at the first two columns and says: “Writer A is our most productive journalist. Writer D needs to step it up.”

Consider:

- What is she reading correctly — and what is she missing entirely?

- If your goal is subscription growth, which writer is contributing most? Which single metric makes that clearest?

- What perverse incentive could author-level traffic measurement create for Writer A — and how might it affect editorial quality over time?

- Propose two guardrails you would add before sharing this dashboard with the full team. What would they be, and why?

The more data is used to evaluate people, the more carefully it needs to be framed.